A new Federal Trade Commission staff report that examines the data collection and use practices of major social media and video streaming services shows they engaged in vast surveillance of consumers in order to monetize their personal information while failing to adequately protect users online, especially children and teens.

The staff report is based on responses to 6(b) orders issued in December 2020 to nine companies including some of the largest social media and video streaming services: Amazon.com, Inc., which owns the gaming platform Twitch; Facebook, Inc. (now Meta Platforms, Inc.); YouTube LLC; Twitter, Inc. (now X Corp.); Snap Inc.; ByteDance Ltd., which owns the video-sharing platform TikTok; Discord Inc.; Reddit, Inc.; and WhatsApp Inc.

The orders asked for information about how the companies collect, track and use personal and demographic information, how they determine which ads and other content are shown to consumers, whether and how they apply algorithms or data analytics to personal and demographic information, and how their practices impact children and teens.

“The report lays out how social media and video streaming companies harvest an enormous amount of Americans’ personal data and monetize it to the tune of billions of dollars a year,” said FTC Chair Lina M. Khan. “While lucrative for the companies, these surveillance practices can endanger people’s privacy, threaten their freedoms, and expose them to a host of harms, from identity theft to stalking. Several firms’ failure to adequately protect kids and teens online is especially troubling. The Report’s findings are timely, particularly as state and federal policymakers consider legislation to protect people from abusive data practices.”

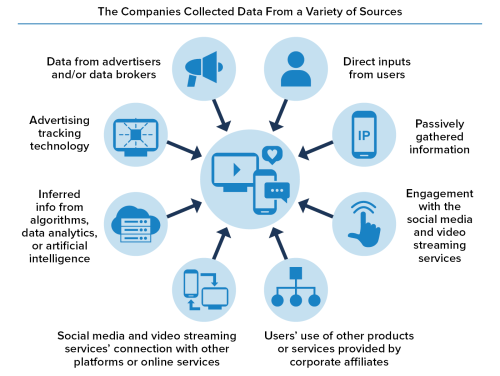

The report found that the companies collected, and could retain indefinitely, troves of data, including information obtained from data brokers and information about both users and non-users of their platforms. The staff report further highlights that many companies engaged in broad data sharing that raises serious concerns about the adequacy of the companies’ data handling controls and oversight. In particular, the staff report noted that the companies’ data collection, minimization and retention practices were “woefully inadequate.” In addition, the staff report found that some companies did not delete all user data in response to user deletion requests.

The staff report also found that the business models of many of the companies incentivized the mass collection of user data for monetization, especially through targeted advertising, which accounts for most of their revenue. It further noted that those incentives were in tension with users’ privacy and therefore put that privacy at risk. Notably, the report found that some companies deployed privacy-invasive tracking technologies, such as pixels, to facilitate advertising to users based on their preferences and interests.

Additionally, the staff report highlighted the many ways in which the companies fed users’ and non-users’ personal information into their automated systems, including for use by their algorithms, data analytics, and AI. The report found that users and non-users had little or no way to opt out of how their data was used by these automated systems, and that there were differing, inconsistent, and inadequate approaches to monitoring and testing the use of automated systems.

Furthermore, the staff report concluded that the social media and video streaming services didn’t adequately protect children and teens on their sites. The report cited research that found social media and digital technology contributed to negative mental health impacts on young users.

Based on the data collected, the staff report said many companies asserted that there were no children on their platforms because their services were not directed to children or did not allow children to create accounts. The staff report noted that this was an apparent attempt to avoid liability under the Children’s Online Privacy Protection Act (COPPA) Rule. The staff report also found that the social media and video streaming services often treated teens the same as adult users, with most companies allowing teens on their platforms with no account restrictions.

The report also noted some of the potential competition implications of the companies’ data practices. It observed that companies that amass significant amounts of user data may be in a position to achieve market dominance, which could lead to harmful practices in which companies prioritize acquiring data at the expense of user privacy. It added that when competition among social media and video streaming services is limited, consumers have limited choices.

The staff report makes recommendations to policymakers and companies based on staff’s observations, findings, and analysis, including:

- Congress should pass comprehensive federal privacy legislation to limit surveillance, address baseline protections, and grant consumers data rights;

- Companies should limit data collection, implement concrete and enforceable data minimization and retention policies, limit data sharing with third parties and affiliates, delete consumer data when it is no longer needed, and adopt consumer-friendly privacy policies that are clear, simple, and easily understood;

- Companies should not collect sensitive information through privacy-invasive ad tracking technologies;

- Companies should carefully examine their policies and practices regarding ad targeting based on sensitive categories;

- Companies should address the lack of user control over how their data is used by automated systems, as well as the lack of transparency regarding how such systems are used, and should implement more stringent testing and monitoring standards for such systems;

- Companies should not ignore the reality that there are child users on their platforms, should treat COPPA as representing the minimum requirements, and should provide additional safety measures for children;

- Companies should recognize that teens are not adults and provide them greater privacy protections; and

- Congress should pass federal privacy legislation to fill the gap in privacy protections provided by COPPA for teens aged 13 and older.

The Commission voted 5-0 to issue the staff report. Chair Khan and Commissioners Alvaro Bedoya, Melissa Holyoak and Andrew N. Ferguson each released separate statements.

The lead attorneys on this matter are Jacqueline Ford, Ronnie Solomon and Ryan Mehm from the FTC’s Bureau of Consumer Protection.